White House Releases New National Cyber Strategy and Executive Order

On March 6, 2026, the Administration released “President Trump’s Cyber Strategy for America” alongside an Executive Order (entitled “Combating Cybercrime, Fraud, and Predatory Schemes Against American Citizens”) and accompanying Fact Sheet. The framework set forth in the Strategy document is significantly shorter and higher-level than the prior National Cybersecurity Strategy issued in March 2023. We have summarized below the highlights of the Strategy document (Part I) and the Executive Order (Part II), along with key takeaways from each and areas to watch going forward.

Shayan Karbassi

Shayan Karbassi helps clients across industries navigate complex national security and cybersecurity matters, including government and internal investigations, incident and crisis response, regulatory compliance, and litigation.

As part of his cyber practice, Shayan assists clients with cybersecurity incident response and notification obligations, government and internal investigations of False Claims Act (FCA) issues and insider threats, and compliance with new and evolving federal and state cybersecurity regulations. Shayan also advises U.S. government contractors on security compliance under U.S. national security laws and regulations including, among others, the National Industrial Security Program Operating Manual (NISPOM), the Federal Risk and Authorization Management Program (FedRAMP), and other U.S. government cybersecurity regulations.

More broadly, Shayan helps clients navigate potential civil and criminal legal risks stemming from operations in certain high-risk jurisdictions. This includes advising clients on U.S. criminal and civil antiterrorism laws, conducting internal investigations of terrorism-financing and related issues, and litigating Anti-Terrorism Act (ATA) claims.

Shayan maintains an active pro bono litigation practice with a focus on human rights, freedom of information, and free media issues.

Before joining Covington, Shayan served as a member of the U.S. intelligence community, where he routinely provided strategic analysis to the President and other senior U.S. policymakers.

Cybersecurity Information Sharing Act of 2015 Allowed to Sunset

The Cybersecurity Information Sharing Act of 2015 (“CISA 2015”), which provided protections for sharing cybersecurity threat information with the federal government and others, officially sunset on September 30, 2025 pursuant to the law’s original sunset date after efforts to re-authorize it did not succeed. The law created a cybersecurity information…

U.S. Tech Legislative, Regulatory & Litigation Update – Second Quarter 2024

This quarterly update highlights key legislative, regulatory, and litigation developments in the second quarter of 2024 related to artificial intelligence (“AI”), connected and automated vehicles (“CAVs”), and data privacy and cybersecurity.

I. Artificial Intelligence

Federal Legislative Developments

- Impact Assessments: The American Privacy Rights Act of 2024 (H.R. 8818, hereinafter “APRA”) was formally introduced in the House by Representative Cathy McMorris Rodgers (R-WA) on June 25, 2024. Notably, while previous drafts of the APRA, including the May 21 revised draft, would have required algorithm impact assessments, the introduced version no longer has the “Civil Rights and Algorithms” section that contained these requirements.

- Disclosures: In April, Representative Adam Schiff (D-CA) introduced the Generative AI Copyright Disclosure Act of 2024 (H.R. 7913). The Act would require persons that create a training dataset that is used to build a generative AI system to provide notice to the Register of Copyrights containing a “sufficiently detailed summary” of any copyrighted works used in the training dataset and the URL for such training dataset, if the dataset is publicly available. The Act would require the Register to issue regulations to implement the notice requirements and to maintain a publicly available online database that contains each notice filed.

- Public Awareness and Toolkits: Certain legislative proposals focused on increasing public awareness of AI and its benefits and risks. For example, Senator Todd Young (R-IN) introduced the Artificial Intelligence Public Awareness and Education Campaign Act (S. 4596), which would require the Secretary of Commerce, in coordination with other agencies, to carry out a public awareness campaign that provides information regarding the benefits and risks of AI in the daily lives of individuals. Senator Edward Markey (D-MA) introduced the Social Media and AI Resiliency Toolkits in Schools Act (S. 4614), which would require the Department of Education and the federal Department of Health and Human Services to develop toolkits to inform students, educators, parents, and others on how AI and social media may impact student mental health.

- Senate AI Working Group Releases AI Roadmap: On May 15, the Bipartisan Senate AI Working Group published a roadmap for AI policy in the United States (the “AI Roadmap”). The AI Roadmap encourages committees to conduct further research on specific issues relating to AI, such as “AI and the Workforce” and “High Impact Uses for AI.” It states that existing laws (concerning, e.g., consumer protection, civil rights) “need to consistently and effectively apply to AI systems and their developers, deployers, and users” and raises concerns about AI “black boxes.” The AI Roadmap also addresses the need for best practices and the importance of having a human in the loop for certain high impact automated tasks.

CISA Issues Notice of Proposed Rulemaking for Critical Infrastructure Cybersecurity Incident Reporting

On March 27, 2024, the U.S. Cybersecurity and Infrastructure Security Agency’s (“CISA”) Notice of Proposed Rulemaking (“Proposed Rule”) related to the Cyber Incident Reporting for Critical Infrastructure Act of 2022 (“CIRCIA”) was released on the Federal Register website. The Proposed Rule, which will be formally published in the Federal Register on April 4, 2024, proposes draft regulations to implement the incident reporting requirements for critical infrastructure entities from CIRCIA, which President Biden signed into law in March 2022. CIRCIA established two cyber incident reporting requirements for covered critical infrastructure entities: a 24-hour requirement to report ransomware payments and a 72-hour requirement to report covered cyber incidents to CISA. While the overarching requirements and structure of the reporting process were established under the law, CIRCIA also directed CISA to issue the Proposed Rule within 24 months of the law’s enactment to provide further detail on the scope and implementation of these requirements. Under CIRCIA, the final rule must be published by September 2025.
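The two reporting windows described above reduce to simple date arithmetic. The following is a minimal sketch, not an official tool: the function name, the `kind` labels, and the choice of when the clock starts are all illustrative assumptions, and the caller must supply the triggering time that applies under the rule.

```python
from datetime import datetime, timedelta, timezone

# Reporting windows as summarized in the post: 24 hours for ransomware
# payments and 72 hours for covered cyber incidents.
REPORTING_WINDOWS = {
    "ransom_payment": timedelta(hours=24),
    "covered_incident": timedelta(hours=72),
}


def reporting_deadline(trigger_time: datetime, kind: str) -> datetime:
    """Return trigger_time plus the window for the given report kind.

    trigger_time is whatever moment starts the clock. Determining that
    moment is a legal question this sketch does not attempt to answer.
    """
    if kind not in REPORTING_WINDOWS:
        raise ValueError(f"unknown report kind: {kind!r}")
    return trigger_time + REPORTING_WINDOWS[kind]


if __name__ == "__main__":
    # Hypothetical trigger time, chosen only for illustration.
    start = datetime(2024, 4, 4, 12, 0, tzinfo=timezone.utc)
    for kind in REPORTING_WINDOWS:
        print(kind, reporting_deadline(start, kind).isoformat())
```

Using timezone-aware timestamps avoids ambiguity when an incident and the reporting team sit in different time zones.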

The Proposed Rule addresses various elements of CIRCIA, which will be covered in a forthcoming Client Alert. This blog post focuses primarily on the proposed definitions of two pivotal terms that were left to further rulemaking under CIRCIA (Covered Entity and Covered Cyber Incident), which illustrate the broad scope of CIRCIA’s reporting requirements, as well as certain proposed exceptions to the reporting requirements. The Proposed Rule will be subject to a review and comment period for 60 days after publication in the Federal Register.

Covered Entities

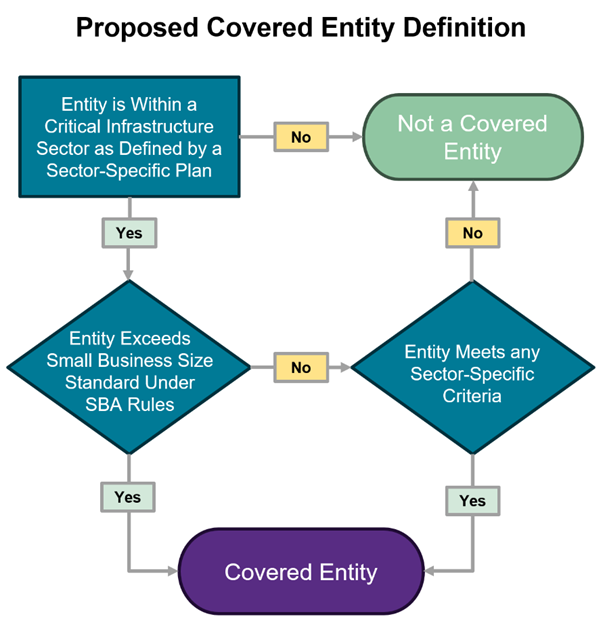

CIRCIA broadly defined “Covered Entity” to include entities that are in one of the 16 critical infrastructure sectors established under Presidential Policy Directive 21 (“PPD-21”) and directed CISA to develop a more comprehensive definition in subsequent rulemaking. Accordingly, the Proposed Rule (1) addresses how to determine whether an entity is “in” one of the 16 sectors and (2) proposed two additional criteria for the Covered Entity definition, either of which must be met in order for an entity to be covered. Notably, the Proposed Rule’s definition of Covered Entity would encompass the entire corporate entity, even if only a constituent part of its business or operations meets the criteria. Thus, Covered Cyber Incidents experienced by a Covered Entity would be reportable regardless of which part of the organization suffered the impact. In total, CISA estimates that over 300,000 entities would be covered by the Proposed Rule.

Decision tree that demonstrates the overarching elements of the Covered Entity definition. For illustrative purposes only.
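The overall shape of the Covered Entity test described above (in a sector, plus at least one of two additional criteria) can be sketched as a boolean check. This is an illustrative sketch only: the excerpt does not name the two criteria, so the field names below are hypothetical placeholders, and the actual determinations require legal analysis of the Proposed Rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EntityProfile:
    # All three fields are hypothetical placeholders. The excerpt does not
    # spell out how each determination is made.
    in_critical_infrastructure_sector: bool
    meets_first_criterion: bool
    meets_second_criterion: bool


def is_covered_entity(profile: EntityProfile) -> bool:
    """Sector membership AND at least one of the two additional criteria."""
    return profile.in_critical_infrastructure_sector and (
        profile.meets_first_criterion or profile.meets_second_criterion
    )


if __name__ == "__main__":
    example = EntityProfile(
        in_critical_infrastructure_sector=True,
        meets_first_criterion=False,
        meets_second_criterion=True,
    )
    print(is_covered_entity(example))
```

Because the proposed definition reaches the entire corporate entity, a check like this would be evaluated once per entity rather than per business unit.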

U.S. Tech Legislative, Regulatory & Litigation Update – Fourth Quarter 2023

This quarterly update highlights key legislative, regulatory, and litigation developments in the fourth quarter of 2023 and early January 2024 related to technology issues. These included developments related to artificial intelligence (“AI”), connected and automated vehicles (“CAVs”), data privacy, and cybersecurity. As noted below, some of these developments provide companies with the opportunity for participation and comment.

I. Artificial Intelligence

Federal Executive Developments on AI

The Executive Branch and U.S. federal agencies had an active quarter, which included the White House’s October 2023 release of the Executive Order (“EO”) on Safe, Secure, and Trustworthy Artificial Intelligence. The EO declares a host of new actions for federal agencies designed to set standards for AI safety and security; protect Americans’ privacy; advance equity and civil rights; protect vulnerable groups such as consumers, patients, and students; support workers; promote innovation and competition; advance American leadership abroad; and effectively regulate the use of AI in government. The EO builds on the White House’s prior work surrounding the development of responsible AI. Concerning privacy, the EO sets forth a number of requirements for the use of personal data for AI systems, including the prioritization of federal support for privacy-preserving techniques and strengthening privacy-preserving research and technologies (e.g., cryptographic tools). Regarding equity and civil rights, the EO calls for clear guidance to landlords, Federal benefits programs, and Federal contractors to keep AI systems from being used to exacerbate discrimination. The EO also sets out requirements for developers of AI systems, including requiring companies developing any foundation model “that poses a serious risk to national security, national economic security, or national public health and safety” to notify the federal government when training the model and provide results of all red-team safety tests to the government.

Federal Legislative Activity on AI

Congress continued to evaluate AI legislation and proposed a number of AI bills, though none of these bills are expected to progress in the immediate future. For example, members of Congress continued to hold meetings on AI and introduced bills related to deepfakes, AI research, and transparency for foundational models.

- Deepfakes and Inauthentic Content: In October 2023, a group of bipartisan senators released a discussion draft of the NO FAKES Act, which would prohibit persons or companies from producing an unauthorized digital replica of an individual in a performance or hosting unauthorized digital replicas if the platform has knowledge that the replica was not authorized by the individual depicted.

- Research: In November 2023, Senator Thune (R-SD), along with five bipartisan co-sponsors, introduced the Artificial Intelligence Research, Innovation, and Accountability Act (S. 3312), which would require covered internet platforms that operate generative AI systems to provide their users with clear and conspicuous notice that the covered internet platform uses generative AI.

- Transparency for Foundational Models: In December 2023, Representative Beyer (D-VA-8) introduced the AI Foundation Model Act (H.R. 6881), which would direct the Federal Trade Commission (“FTC”) to establish transparency standards for foundation model deployers in consultation with other agencies. The standards would require companies to provide consumers and the FTC with information on a model’s training data and mechanisms, as well as information regarding whether user data is collected in inference.

- Bipartisan Senate Forums: Senator Schumer’s (D-NY) AI Insight Forums, which are a part of his SAFE Innovation Framework, continued to take place this quarter. As part of these forums, bipartisan groups of senators met multiple times to learn more about key issues in AI policy, including privacy and liability, long-term risks of AI, and national security.

U.S. Tech Legislative & Regulatory Update – Second Quarter 2023

This quarterly update summarizes key legislative and regulatory developments in the second quarter of 2023 related to key technologies and related topics, including Artificial Intelligence (“AI”), the Internet of Things (“IoT”), connected and automated vehicles (“CAVs”), data privacy and cybersecurity, and online teen safety.

Artificial Intelligence

AI continued to be an area of significant interest to both lawmakers and regulators throughout the second quarter of 2023. Members of Congress continue to grapple with ways to address risks posed by AI and have held hearings, made public statements, and introduced legislation to regulate AI. Notably, Senator Chuck Schumer (D-NY) revealed his “SAFE Innovation framework” for AI legislation. The framework reflects five principles for AI – security, accountability, foundations, explainability, and innovation – and is summarized here. There were also a number of AI legislative proposals introduced this quarter. Some proposals, like the National AI Commission Act (H.R. 4223) and Digital Platform Commission Act (S. 1671), propose the creation of an agency or commission to review and regulate AI tools and systems. Other proposals focus on mandating disclosures of AI systems. For example, the AI Disclosure Act of 2023 (H.R. 3831) would require generative AI systems to include a specific disclaimer on any outputs generated, and the REAL Political Advertisements Act (S. 1596) would require political advertisements to include a statement within the contents of the advertisement if generative AI was used to generate any image or video footage. Additionally, Congress convened hearings to explore AI regulation this quarter, including a Senate Judiciary Committee Hearing in May titled “Oversight of A.I.: Rules for Artificial Intelligence.”

There also were several federal Executive Branch and regulatory developments focused on AI in the second quarter of 2023, including, for example:

- White House: The White House issued a number of updates on AI this quarter, including the Office of Science and Technology Policy’s strategic plan focused on federal AI research and development, discussed in greater detail here. The White House also requested comments on the use of automated tools in the workplace, including a request for feedback on tools to surveil, monitor, evaluate, and manage workers, described here.

- CFPB: The Consumer Financial Protection Bureau (“CFPB”) issued a spotlight on the adoption and use of chatbots by financial institutions.

- FTC: The Federal Trade Commission (“FTC”) continued to issue guidance on AI, such as guidance expressing the FTC’s view that dark patterns extend to AI, that generative AI poses competition concerns, and that tools claiming to spot AI-generated content must make accurate disclosures of their abilities and limitations.

- HHS Office of National Coordinator for Health IT: This quarter, the Department of Health and Human Services (“HHS”) released a proposed rule related to certified health IT that enables or interfaces with “predictive decision support interventions” (“DSIs”) that incorporate AI and machine learning technologies. The proposed rule would require the disclosure of certain information about predictive DSIs to enable users to evaluate DSI quality and whether and how to rely on the DSI recommendations, including a description of the development and validation of the DSI. Developers of certified health IT would also be required to implement risk management practices for predictive DSIs and make summary information about these practices publicly available.

SEC Delays Cybersecurity Rules

Earlier this week, the Securities and Exchange Commission (“SEC”) published an update to its rulemaking agenda indicating that it does not plan to approve two proposed cyber rules until at least October 2023 (the agenda’s timeframe is an estimate). The proposed rules in question address disclosure requirements regarding cybersecurity governance…

FERC Orders Development of New Internal Network Security Monitoring Standards

The Federal Energy Regulatory Commission (“FERC”) issued a final rule (Order No. 887) directing the North American Electric Reliability Corporation (“NERC”) to develop new or modified Reliability Standards that require internal network security monitoring (“INSM”) within Critical Infrastructure Protection (“CIP”) networked environments. This Order may be of interest to entities that develop, implement, or maintain hardware or software for operational technologies associated with bulk electric systems (“BES”).

The forthcoming standards will only apply to certain high- and medium-impact BES Cyber Systems. The final rule also requires NERC to conduct a feasibility study for implementing similar standards across all other types of BES Cyber Systems. NERC must propose the new or modified standards within 15 months of the effective date of the final rule, which is 60 days after the date of publication in the Federal Register.
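The timeline in the paragraph above is a two-step date calculation: the effective date is 60 days after Federal Register publication, and NERC's deadline is 15 months after that. The sketch below illustrates the arithmetic under stated assumptions: the function names are hypothetical, the publication date in the usage example is made up, and clamping to the last day of a shorter month is one possible counting convention, not something the final rule specifies.

```python
import calendar
from datetime import date, timedelta


def add_months(start: date, months: int) -> date:
    """Add calendar months, clamping the day to the target month's length."""
    index = start.month - 1 + months
    year = start.year + index // 12
    month = index % 12 + 1
    day = min(start.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def insm_timeline(publication: date) -> tuple[date, date]:
    """Return (effective date, NERC proposal deadline) for a publication date."""
    effective = publication + timedelta(days=60)
    return effective, add_months(effective, 15)


if __name__ == "__main__":
    # Hypothetical publication date, used only to show the arithmetic.
    effective, deadline = insm_timeline(date(2023, 1, 1))
    print(effective.isoformat(), deadline.isoformat())
```

Any real deadline should be confirmed against the dates stated in the Federal Register notice itself.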

Background

According to the FERC news release, the 2020 global supply chain attack involving the SolarWinds Orion software demonstrated how attackers can “bypass all network perimeter-based security controls traditionally used to identify malicious activity and compromise the networks of public and private organizations.” FERC determined that current CIP Reliability Standards focus on preventing unauthorized access at the electronic security perimeter, which leaves CIP-networked environments vulnerable to attacks that bypass perimeter-based security controls. The new or modified Reliability Standards (“INSM Standards”) are intended to address this gap by requiring responsible entities to employ INSM in certain BES Cyber Systems. INSM is a subset of network security monitoring that enables continuous visibility over communications between networked devices that are in the so-called “trust zone,” a term which generally describes a discrete and secure computing environment. For purposes of the rule, the trust zone is any CIP-networked environment. In addition to continuous visibility, INSM facilitates the detection of malicious and anomalous network activity to identify and prevent attacks in progress. Examples provided by FERC of tools that may support INSM include anti-malware, intrusion detection systems, intrusion prevention systems, and firewalls.
